Software is hard

A horribly embarrassing software disaster happened on Monday. It was caused by an expired certificate, a mechanism used to verify and authenticate a software service.

As big as it was, I doubt anybody will lose their job over the Microsoft Teams outage even though it had an impact on millions of paying customers. [Hmmmm. Perhaps you were thinking of another software issue on Monday???]

Update: Microsoft has been having a very bad week. But you won’t see much gloating from competitors because every single one of us in this business knows that it could have been us.

ANY LARGE SYSTEM IS GOING TO BE OPERATING MOST OF THE TIME IN FAILURE MODE.

— Systemantics Quotes (@SysQuotes) November 30, 2019

Microsoft, like my own employer, understands that software is incredibly complex and that even in the best possible worlds, things get missed. They know that “failure mode” is normal and that any system that presumes there isn’t some component failing at any given time is worthless. They will review what happened, review what procedures could have prevented it from happening, correct the errors and move on. Then in the future some even less probable failure will happen and need to be addressed. At my employer we have a formal Correction of Errors process to handle this, but most large software organizations do something similar. It is a never-ending process.

One of the keys to doing it right is understanding that blaming people doesn’t work. (Or at least it rarely does.) If a person did something wrong (or failed to do something necessary), it’s because a system was missing something. If a certificate didn’t get renewed, why not? What process was in place to alert the responsible person that the certificate needed to be renewed? What automatic escalation was in place to alert their backup and/or management that the certificate was about to expire but hadn’t been renewed? I have no knowledge of the details of the failure inside Microsoft, but I’m sure they’re asking a series of questions like this right now, and I suspect that somebody is updating internal dashboards and reporting systems to track the expiration dates on certificates.
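As a rough illustration of the kind of check those questions point toward, here is a minimal Python sketch. It assumes a hypothetical escalation policy (notify the owner 30 days out, escalate to a backup and management inside 7 days, page everyone once expired); the thresholds, messages and function names are made up for illustration and say nothing about how Microsoft or anyone else actually does it. `fetch_not_after` uses only the standard `ssl` and `socket` modules to read a live server’s expiry date.

```python
import socket
import ssl
from datetime import datetime, timedelta, timezone

# Hypothetical thresholds, purely illustrative: nobody's real policy.
OWNER_WARNING = timedelta(days=30)
ESCALATION_WINDOW = timedelta(days=7)


def fetch_not_after(host: str, port: int = 443, timeout: float = 5.0) -> datetime:
    """Connect over TLS and return the server certificate's expiry time (UTC).

    With the default context the handshake verifies the certificate, so an
    already-expired certificate raises ssl.SSLCertVerificationError here,
    which is itself a signal worth alerting on.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    seconds = ssl.cert_time_to_seconds(cert["notAfter"])
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


def escalation_for(not_after: datetime, now: datetime) -> str:
    """Decide who should hear about a certificate, given when it expires."""
    remaining = not_after - now
    if remaining <= timedelta(0):
        return "expired: page on-call and management"
    if remaining <= ESCALATION_WINDOW:
        return "escalate: owner's backup and management"
    if remaining <= OWNER_WARNING:
        return "notify: certificate owner"
    return "ok"
```

In practice something like `escalation_for(fetch_not_after("example.com"), datetime.now(timezone.utc))` would run on a schedule over an inventory of every certificate. The escalation step matters more than the first notice: it covers the case where the owner is on vacation or the notice simply gets lost.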

It’s not easy and it’s not cheap

It’s mostly true.

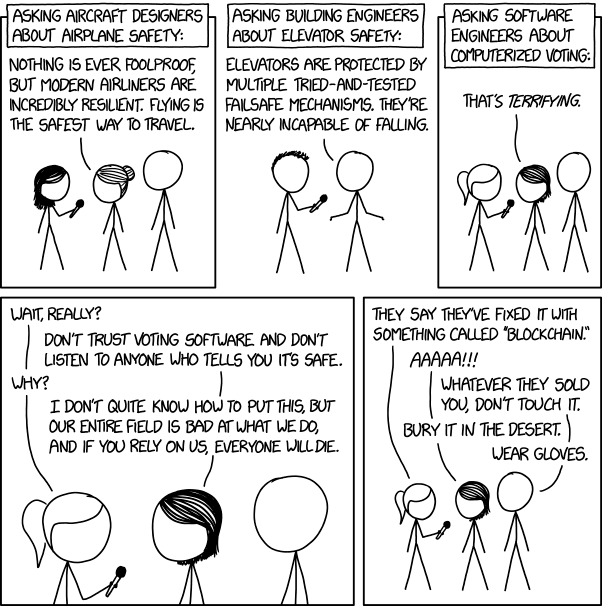

https://xkcd.com/2030/

That’s a tough place to get to, but it’s where you need to be to create reliable software. You need people who are tough and vigilant about methodically addressing every error, breaking it down to the systemic causes and making sure they’re addressed. That takes, among other things, appreciation for the difficulty, and experience dealing with that difficulty. Neither one comes cheap and neither can be provided by a bunch of insider party hacks with no relevant software experience. [Yes, I will be addressing that other outage.]

It can take many years of work to build a program up to the point where it is truly robust and reliable. Some never get there. We prefer to start by building something small (a “minimum viable product”), introduce it to a small group of people to test (“beta testers”), then slowly add both people and features until we have something close to the full product and market we hoped for.

Most software is built incrementally like this. It can be annoying if you’re using the app and it’s constantly being updated and changed, but that’s traded off against the ability to release something small then make small and mostly working improvements to it over time.

But this isn’t like most apps…

What if you have a one-time (or very rare) event like a flight to the moon, a scientific experiment, or a political caucus? That software has to work with all features intact and operating on the first day of real-world use. You can’t build up the features slowly. Compounding this, those one-off situations also tend to be the ones that have unusual and arcane rules that may not be fully articulated anywhere.

You can do a few things:

- If the cost of doing it manually isn’t that high, why bother with software at all? This is the question I keep asking myself about Iowa. Staff some phone banks with volunteers and have precinct leaders call in the totals. If it’s so complicated that you can’t record and tally the participation on a sheet of paper, you probably need to rethink the whole thing. Thinking that software can manage the unmanageable is always a mistake.

- Spend a huge amount of money and time up front on simulations, “game days,” and user orientation. This was the Apollo program approach. They spent a significant chunk of US GDP on simulations of the real thing because you can’t test the software in use and pop back from the moon for a few weeks when a problem comes up. Simulations can be more expensive than the event itself and require special test environments. The Apollo program designed and built special test rockets that never carried a person just to verify that the escape system worked, for example.

To put it in the context of a political caucus, this would require simulating people participating in hundreds of precincts with varying levels of wireless connectivity, using different devices, over and over until all the wrinkles are ironed out. You could use real people or machines to run the simulations, but you do need to run them. In the software world we call these “game days,” and we do them all the time to test that everything works as it should under real-world conditions and that all the people involved know what to do.

- Find something that does part of the job, knowing you’ll need to “fill in” with a manual process. The downside is that this is often more complex than just doing the whole thing manually, and it can introduce the problem of people working inconsistently. We often tally things in spreadsheets, for example. They’re a fine tool for all sorts of tallying, counting, adding and reporting, but their flexibility means that two different people might use them in different ways. Training and some degree of expertise become critical, which can make things even more complex and expensive.

- Do none of the above and hope for the best. Sadly, this happens all too often.
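A game day is ultimately about people, but the connectivity part of the rehearsal can be automated too. The sketch below is a toy Monte Carlo model that assumes made-up precinct counts, connectivity tiers and retry limits (none of these come from Iowa or the actual app, and all names are hypothetical): each precinct tries a few times to upload its totals over a link that sometimes fails, and each simulated night reports how many precincts never got through. Running it over and over with different seeds is the cheap, machine-driven cousin of the rehearsals described above.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Precinct:
    name: str
    upload_success_rate: float  # chance that a single upload attempt gets through


def report_gets_through(p: Precinct, max_attempts: int, rng: random.Random) -> bool:
    """True if the precinct's totals arrive within max_attempts tries."""
    for _ in range(max_attempts):
        if rng.random() < p.upload_success_rate:
            return True
    return False


def simulate_night(precincts: List[Precinct], max_attempts: int, rng: random.Random) -> List[str]:
    """One simulated caucus night: return the names of precincts that never reported."""
    return [p.name for p in precincts if not report_gets_through(p, max_attempts, rng)]


def make_precincts(count: int, rng: random.Random) -> List[Precinct]:
    # Hypothetical connectivity tiers: good coverage, spotty coverage, nearly none.
    tiers = [0.95, 0.6, 0.1]
    return [Precinct(f"precinct-{i}", rng.choice(tiers)) for i in range(count)]


def run_game_days(rounds: int = 10, count: int = 500, max_attempts: int = 3,
                  seed: int = 2020) -> List[int]:
    """Repeat the simulated night; return how many precincts went missing each time."""
    rng = random.Random(seed)  # fixed seed so a failing run can be replayed exactly
    precincts = make_precincts(count, rng)
    return [len(simulate_night(precincts, max_attempts, rng)) for _ in range(rounds)]
```

Calling `run_game_days()` returns one count of missing precincts per simulated night. Any nonzero count is exactly the kind of result that should send people back to fix the app, add a phone-in fallback, or both, before the real event.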

There are real costs and trade-offs

A launch-day app NOT failing would require a conspiracy.

This is just called development.

— SwiftOnSecurity (@SwiftOnSecurity) February 4, 2020

Doing it right is expensive as hell. Sorry to all those people who took a class and built a simple iPhone app. I did that too, and it’s truly magical how much you can do with very little. But the little app I built to retrieve information from a public database didn’t have lots of concurrent users trying to do different things, and it was hitting a huge public database (IMDb) that’s scaled to handle millions of people requesting data every minute. It didn’t need to be secure. There was no login and no verification. It didn’t need to faithfully record anything I put into it, or to transmit that information and save it in a database somewhere out on the internet. It was great at retrieving and displaying a random Star Trek quote, and that’s it. What I built could be done by a neophyte in just a few days, but that’s not the reality of most apps most people use.

Secure and robust apps can’t be built by neophytes (though I always like to have a junior or two on the team), and they’re not cheap. The Nevada Democrats paid the creator of this app $58,000, and presumably the Iowans paid about the same, so let’s call it about $120,000. At that price, they got exactly what they paid for: a cute prototype with little reliability and no security. It was at best what I’d consider a decent proof of concept, something that could be shown to the customer as an example of what the real thing would look like many months later. It’s been a long time since I did anything in the mobile space, but if you asked me what I would expect to pay for something like this with all the necessary features, testing and security, my rough guess would be about 20 times what Iowa and Nevada jointly paid, or roughly $2.4 million.

The IowaReporterApp was so insecure that vote totals, passwords and other sensitive information could have been intercepted or even changed, according to officials at Massachusetts-based Veracode…

ProPublica
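The “intercepted or even changed” problem has well-known building blocks. One of them is a message authentication code, sketched minimally below in Python. It assumes each precinct shares a secret key with the server (how those keys get distributed is its own hard problem and is not shown) and signs each report with HMAC-SHA256 from the standard library, so the server can detect any change to the totals. The function names and report format are hypothetical; this is not how the IowaReporterApp worked, it does not replace TLS for keeping data private in transit, and on its own it does not stop an attacker from replaying an old, validly signed report.

```python
import hashlib
import hmac
import json


def canonical_bytes(report: dict) -> bytes:
    # Sorted keys and fixed separators so sender and server hash identical bytes.
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_report(report: dict, precinct_key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the canonical form of the report."""
    return hmac.new(precinct_key, canonical_bytes(report), hashlib.sha256).hexdigest()


def verify_report(report: dict, tag: str, precinct_key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign_report(report, precinct_key), tag)
```

A hypothetical report such as `{"precinct": "precinct-17", "totals": {"Candidate A": 112, "Candidate B": 98}}` verifies with the right key, but changing any number, or checking it against a different precinct’s key, makes `verify_report` return `False`.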

A decent software engineer earns twice as much in a year as this app cost. A strong software architect (which you’d want for an app requiring this level of security and reliability) can easily hit $300,000 and is often paid more. A team of 3 people could build this app to a very high standard in a couple of months using a secure cloud provider as the backend. (Please, Democrats, no more standalone servers!) Then you’d need to spend more, probably far more, on testing and security reviews. You would want to bring in an outside tester or security reviewer to have a look, and as with software engineers, the good ones (the ones you should trust with an election) aren’t cheap.

Too expensive? Then do it manually. Or maybe, just maybe, consider something else…

A final thought

One thing we’ve known for years is that software works best when you can simplify the process that the software is automating. Good software is often a great mechanism for revealing stupid “special cases” and one-off situations that can be easily eliminated. At my employer we talk about “invent and simplify” as a core principle. Years of experience by thousands of people have shown that we work best when we do this. Software does very well in environments where there’s a desire to do this. It fails repeatedly when complex and nonsensical rules are put into place for the software to be crafted around, then recrafted when the stupid rules get changed.

The whole point of a caucus is to impose complex and nonsensical rules designed to include some, exclude others and magnify the voices of a few. An “invented and simplified” caucus is called an election. And it’s probably time to consider having one. With hand-marked paper ballots, please. No app required.